In the realm of statistics, few terms evoke as much dread and confusion as the "p-value." For many students embarking on their Six Sigma certification journey, the p-value feels like a mysterious gatekeeper, a mathematical enigma designed to make you feel like you missed a crucial day in high school.

But here is a secret: a p-value is actually a very simple concept wrapped in an intimidating name. You don’t need a PhD in mathematics to understand it; you just need to understand how "surprised" you should be by your data.

To help you master this essential tool for the Analyze Phase of any DMAIC project, we are going to explain the p-value simply, using analogies from real life.

The Surprise Meter: What is a P-Value?

Imagine you are sitting in a coffee shop. You’ve been told that the barista there is a master of "latte art" and never misses a design. You watch them make five coffees. On all five, there is no art, just plain foam.

How surprised are you? Probably quite a bit. Based on what you were told (the "claim"), the outcome you saw was very unlikely.

In statistics, the p-value is simply a numerical measurement of that surprise. It is the probability of seeing results at least as extreme as yours if nothing special is going on, that is, if only random chance is at work.

- A low p-value (typically ≤ 0.05) means your results are very surprising if we assume "nothing special is happening." It suggests that something real (a "root cause") is actually at work.

- A high p-value (> 0.05) means your results are not surprising at all. They could easily have happened by accident.

When you are working through Analyze Phase success criteria, the p-value is your primary tool for validating whether a suspected root cause is real or just a ghost in the machine.

The Courtroom Analogy: "Innocent Until Proven Guilty"

To truly grasp how a p-value works in a business setting, think of a courtroom trial. In the legal system, we have a "Null Hypothesis": The defendant is innocent.

To overturn this assumption, the prosecution must provide evidence so strong that it is "beyond a reasonable doubt."

In Six Sigma, the "Null Hypothesis" (H0) is the assumption that your process change did nothing, or that the factor you are testing has no impact on the outcome. For example: "Changing the supplier does not affect the defect rate."

The p-value measures how strong the evidence against that "innocence" is: the smaller the p-value, the stronger the evidence.

- If the p-value is high (e.g., 0.60): The evidence is weak. There is a 60% chance of seeing evidence at least this strong even if the defendant is innocent. We "fail to reject" the null hypothesis. The defendant walks.

- If the p-value is low (e.g., 0.01): The evidence is strong. There is only a 1% chance we’d see evidence at least this strong if the defendant were actually innocent. We "reject" the null hypothesis. We have found a statistically significant result.

In a professional environment, this keeps us from making expensive mistakes. It prevents us from "convicting" a process step (spending money to fix it) when it isn't actually guilty of causing the problem.
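The verdict logic from the courtroom analogy can be sketched in a few lines of Python. This is only an illustration: the function name `decide` and its default `alpha` of 0.05 are assumptions made for this example, not part of any statistics package.

```python
def decide(p_value: float, alpha: float = 0.05) -> str:
    """Return the hypothesis-test verdict for a p-value at significance level alpha."""
    if not 0.0 <= p_value <= 1.0:
        raise ValueError("a p-value must lie between 0 and 1")
    # Low p-value: the evidence against H0 is strong enough to reject it.
    # High p-value: we fail to reject H0 (which is not the same as proving it).
    return "reject H0" if p_value <= alpha else "fail to reject H0"


print(decide(0.60))  # fail to reject H0 (the defendant walks)
print(decide(0.01))  # reject H0 (statistically significant)
```

Passing a stricter threshold, for example `decide(0.02, alpha=0.01)`, returns "fail to reject H0" even though the same p-value would be significant at the usual 0.05 level.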

The Coin Flip: Is the Game Rigged?

Let's look at a classic example: a coin flip.

If I tell you a coin is fair, and you flip it once and get "Heads," you aren't surprised. The probability of that outcome is 0.50. It’s a 50/50 shot.

But what if you flip it 10 times and get "Heads" 10 times in a row?

Now you’re suspicious. The probability of that happening by pure luck with a fair coin is (1/2)^10 = 1/1024, or roughly 0.001 (0.1%). That is a very low p-value. At this point, you would reject the "Null Hypothesis" (that the coin is fair) and conclude the coin is rigged.
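The coin-flip arithmetic can be checked with a short Python sketch. It computes a one-sided p-value, meaning the chance of getting at least as many heads as observed if the coin is fair. The function name `heads_p_value` is made up for this example.

```python
from math import comb


def heads_p_value(flips: int, heads: int, p_heads: float = 0.5) -> float:
    """One-sided p-value: probability of `heads` or more heads in `flips` flips."""
    return sum(
        comb(flips, k) * p_heads**k * (1 - p_heads) ** (flips - k)
        for k in range(heads, flips + 1)
    )


print(heads_p_value(1, 1))    # 0.5
print(heads_p_value(10, 10))  # 0.0009765625
```

One head in one flip is unremarkable, while ten heads in ten flips falls far below the usual 0.05 cutoff.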

In manufacturing, this might look like testing a new machine setting. If the new setting produces a "perfect" batch, is it because the setting is better, or did you just get a "lucky" batch of raw materials? A hypothesis test, summarized by its p-value, helps you decide. This is especially useful when you are dealing with outlier detection and treatment to ensure your "lucky" batch wasn't just a fluke in the data.

Why 0.05? The "Gold Standard" of Significance

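You will often hear that 0.05 is the magic number for a p-value. This cutoff is called the "Alpha" level: the threshold you choose before running the test, at or below which you call a result statistically significant.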

Why 5%? To be honest, it’s somewhat arbitrary. It was popularized by the statistician Ronald Fisher in the 1920s. Essentially, the industry has agreed that if results like ours would turn up less than 5% of the time when nothing real is going on, we are willing to bet that the effect is real.

However, in high-stakes environments such as cold chain logistics or pharmaceutical manufacturing, a 5% risk of a false alarm might be too high. In those cases, you might require a p-value of 0.01 (1%) or even lower before making a change.

Common Pitfalls: What a P-Value is NOT

Because p-values are often taught poorly, many professionals carry misconceptions into their projects. Let’s clear those up:

1. It doesn’t tell you the "size" of the effect

A low p-value suggests that a difference exists, but it doesn't tell you if that difference is important. For example, you might find a new process that is "statistically significantly faster" with a p-value of 0.001. But if the speed increase is only 0.5 seconds on a 4-hour task, it’s not practically significant. You wouldn't spend $50,000 to implement a change that saves half a second.

2. It isn't the probability that you are right

A p-value of 0.05 does not mean there is a 95% chance your hypothesis is true. It simply means that if nothing were happening, you’d see results at least this extreme only 5% of the time. It is a subtle but important distinction in the world of professional problem-solving.

3. It doesn't mean "No Change" if it's high

If your p-value is 0.15, it doesn't prove there is no effect. It just means you don’t have enough evidence yet to conclude there is one. Maybe your sample size was too small. This is why properly scoping your Measure Phase is so vital: you need enough data to make the p-value meaningful.
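Pitfalls 1 and 3 both come down to sample size, and a rough Python sketch can show it. It uses a simple two-sample z-test, which assumes both groups share a known standard deviation. That is a simplification chosen to keep the example short, and every number below (standard deviations, sample sizes, the 2-minute difference) is hypothetical. The function name `two_sample_z_p_value` is likewise made up for illustration.

```python
from math import erfc, sqrt


def two_sample_z_p_value(mean_diff: float, sd: float, n_per_group: int) -> float:
    """Two-sided p-value for a difference in means (equal, known sd; equal group sizes)."""
    standard_error = sd * sqrt(2.0 / n_per_group)
    z = mean_diff / standard_error
    # For a standard normal variable, P(|Z| >= |z|) equals erfc(|z| / sqrt(2)).
    return erfc(abs(z) / sqrt(2.0))


# Pitfall 1: a 0.5-second gain on a 4-hour (14,400-second) task.
# With a huge sample, even this trivial gain is "statistically significant."
p_tiny_effect = two_sample_z_p_value(mean_diff=0.5, sd=60.0, n_per_group=1_000_000)
relative_gain = 0.5 / 14_400
print(f"p = {p_tiny_effect:.2e}, relative gain = {relative_gain:.4%}")

# Pitfall 3: the same 2-minute difference with a small and a larger sample.
p_small_sample = two_sample_z_p_value(mean_diff=2.0, sd=5.0, n_per_group=10)
p_large_sample = two_sample_z_p_value(mean_diff=2.0, sd=5.0, n_per_group=100)
print(f"n = 10 per group:  p = {p_small_sample:.3f}")
print(f"n = 100 per group: p = {p_large_sample:.4f}")
```

The first case has a very low p-value for a gain nobody would pay for. In the second, the effect is the same size in both runs, and only the amount of data decides which side of 0.05 the p-value lands on.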

Putting the P-Value to Work in Six Sigma

In a real-world Lean Six Sigma project, you’ll encounter the p-value most often during Hypothesis Testing.

For instance, if you are working on bottleneck identification, you might suspect that "Shift B" is slower than "Shift A."

- Step 1: State your Null Hypothesis (H0): Shift A and Shift B have the same speed.

- Step 2: Collect data on cycle times for both shifts.

- Step 3: Run a statistical test (like a t-test) in a tool like Minitab or SigmaXL.

- Step 4: Look at the p-value.

If the p-value comes back as 0.02, you have evidence that Shift B is indeed performing differently. You can now move into the Improve Phase with confidence, knowing you aren't just chasing a random variation in the data.
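The four steps above can also be tried without a statistics package. The sketch below is not the t-test you would run in Minitab or SigmaXL. It swaps in an exact permutation test, which needs only the Python standard library and answers the same question: if the shift labels made no difference, how often would a gap at least this large appear? The cycle times are invented for illustration.

```python
from itertools import combinations
from statistics import mean

# Step 2: hypothetical cycle times in minutes (made-up data).
shift_a = [41, 43, 40, 44, 42, 41]
shift_b = [45, 47, 44, 48, 46, 43]


def permutation_p_value(group_a, group_b):
    """Exact two-sided permutation p-value for a difference in means."""
    pooled = list(group_a) + list(group_b)
    indices = range(len(pooled))
    observed = abs(mean(group_a) - mean(group_b))
    extreme = 0
    total = 0
    # Step 3: try every way of relabeling the pooled times as "A" or "B".
    for chosen in combinations(indices, len(group_a)):
        chosen_set = set(chosen)
        relabeled_a = [pooled[i] for i in chosen]
        relabeled_b = [pooled[i] for i in indices if i not in chosen_set]
        gap = abs(mean(relabeled_a) - mean(relabeled_b))
        if gap >= observed - 1e-12:  # small tolerance for float rounding
            extreme += 1
        total += 1
    return extreme / total


# Step 4: look at the p-value.
p_value = permutation_p_value(shift_a, shift_b)
print(f"p-value = {p_value:.4f}")
print("reject H0" if p_value <= 0.05 else "fail to reject H0")
```

With only six observations per shift, all 924 relabelings can be checked exhaustively. For larger samples, a random subset of relabelings or a conventional t-test is the more practical choice.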

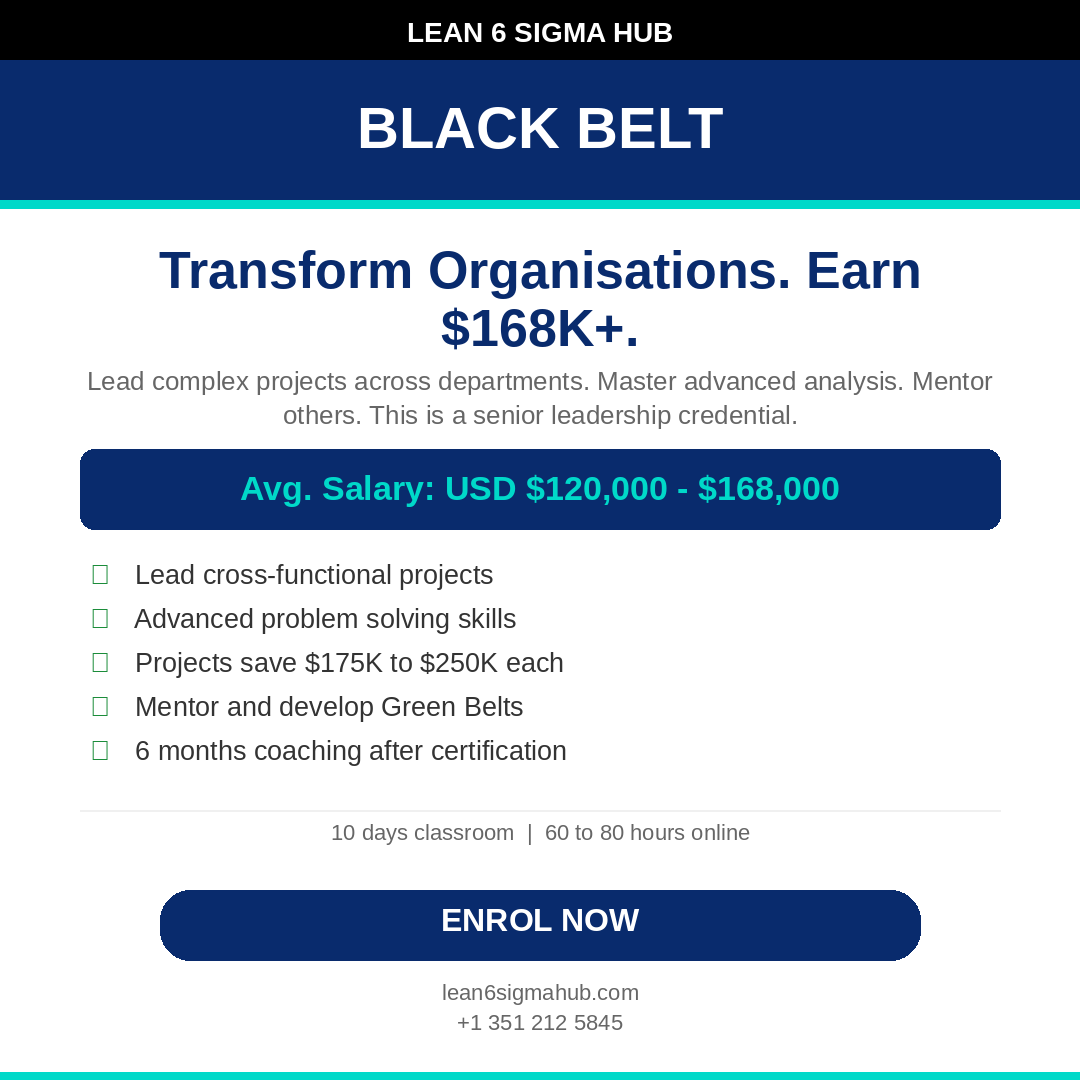

Why This Matters for Your Career

Understanding the p-value is more than just a trick to pass an exam; it’s about becoming a data-driven leader. In the modern workplace, "I think" and "I feel" are being replaced by "The data suggests."

When you can walk into a boardroom and explain why a process change is necessary, backed by a p-value that supports the suspected root cause, you shift from being a "manager" to being a "problem solver." This level of analytical depth is what separates a Yellow Belt from a Black Belt.

Whether you are looking to optimize hybrid workforce productivity or trying to reduce batch sizes in manufacturing, statistical significance is the compass that keeps your project on track.

Summary: The P-Value Cheat Sheet

- P ≤ 0.05: The result is "Statistically Significant." Something is likely happening. Reject the status quo.

- P > 0.05: The result is "Not Significant." It could be random noise. Stay the course or get more data.

- The Goal: To move from guessing to knowing.

Statistics don't have to be a headache. Once you view the p-value as a "Surprise Meter," the math fades into the background, and the insights move to the front.

If you’re ready to stop guessing and start leading with data, take the next step in your career. Explore our Six Sigma Certification programs and master the tools that drive global business excellence.