In the realm of process improvement, the Analyse phase of the DMAIC (Define, Measure, Analyse, Improve, Control) methodology serves as the critical junction where theory meets reality. To the untrained eye, a process plagued by defects or delays appears as a chaotic jumble of variables. However, to a Lean Six Sigma practitioner, this phase is a crime scene, and the practitioner is the Data Detective.

The fundamental purpose of this phase is to move beyond mere observation and into the territory of objective proof. To achieve this, one must master a specific trio of analytical methodologies found within our Lean Six Sigma training materials: Stratification (Chapter 19), Inferential Statistics (Chapter 20), and Hypothesis Testing (Chapter 21). By integrating these tools, an organization can move from subjective "gut feelings" to data-driven conclusions, so that the root causes it acts on are backed by statistical evidence and not merely suggested by surface-level correlations.

Stratification: Slicing the Data to Reveal the Suspect (Chapter 19)

In any investigation, the first step is to organize the evidence. In the context of Lean Six Sigma, this is known as Stratification. As detailed in Chapter 19 of our curriculum, stratification is the process of partitioning data into distinct groups or layers to uncover patterns that might otherwise be hidden in a large, aggregated dataset.

When a process is underperforming, the aggregate figure often hides where the problem actually sits. For example, if a manufacturing line has a 12% defect rate, looking at the total output tells us very little about why the defects occur. To find out, the Data Detective must slice this 12% by various factors:

- By Shift: Are defects higher during the night shift compared to the morning shift?

- By Machine: Is one specific piece of equipment producing 80% of the errors?

- By Material: Does the defect rate spike when using a specific vendor’s raw materials?

- By Operator: Is there a training gap among newer employees?

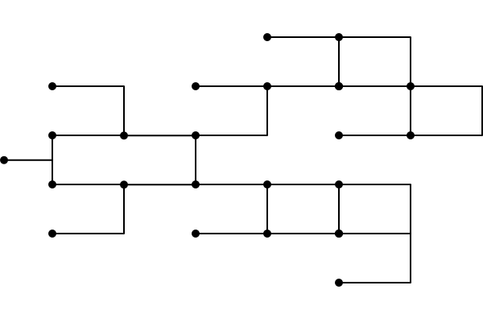

By applying stratification, we narrow the "suspect list." If the data reveals that Machine B on the second shift accounts for the vast majority of the defects, we have successfully isolated the area of interest. This technique is often the precursor to more advanced root cause tools, such as the cause-and-effect diagram, which helps visualize the potential inputs (Xs) leading to the output (Y).
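As a rough illustration of the slicing described above, the following Python sketch stratifies a set of inspection records by shift and machine. The records, counts, and the `defect_rates` helper are invented for this example; they are chosen only so that the overall rate comes out at the 12% used in the text.

```python
from collections import defaultdict

# Hypothetical inspection records: (shift, machine, is_defect).
# All counts are invented for illustration.
records = (
    [("day", "A", False)] * 240 + [("day", "A", True)] * 10
    + [("day", "B", False)] * 235 + [("day", "B", True)] * 15
    + [("night", "A", False)] * 238 + [("night", "A", True)] * 12
    + [("night", "B", False)] * 167 + [("night", "B", True)] * 83
)


def defect_rates(rows, key):
    """Return {stratum: defect rate}, where key(row) names the stratum."""
    totals = defaultdict(int)
    defects = defaultdict(int)
    for row in rows:
        stratum = key(row)
        totals[stratum] += 1
        defects[stratum] += row[2]  # True counts as 1, False as 0
    return {s: defects[s] / totals[s] for s in totals}


overall = sum(r[2] for r in records) / len(records)
by_shift = defect_rates(records, key=lambda r: r[0])
by_machine = defect_rates(records, key=lambda r: r[1])
by_cell = defect_rates(records, key=lambda r: (r[0], r[1]))

print(f"overall defect rate: {overall:.1%}")
# List the shift/machine combinations from worst to best
for cell, rate in sorted(by_cell.items(), key=lambda kv: -kv[1]):
    print(cell, f"{rate:.1%}")
```

In this made-up dataset the overall 12% looks uniform until the shift and machine slices are combined, at which point one cell stands out from the other three.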

Inferential Statistics: Moving from Clues to Conclusions (Chapter 20)

Once stratification has helped us identify potential suspects, the Data Detective must determine if the patterns observed are representative of the entire population or merely a result of random chance. This brings us to Inferential Statistics, covered in Chapter 20 of the Lean 6 Sigma Hub materials.

While descriptive statistics summarize the data we currently have, inferential statistics allow us to make "inferences" or predictions about a larger population based on a sample. In a high-volume environment, it is often impossible or cost-prohibitive to measure every single unit produced. Therefore, we rely on sampling.

The cornerstone of this logic is the Central Limit Theorem. This theorem states that as sample size increases, the distribution of the sample means approaches a normal distribution, largely regardless of the shape of the population’s original distribution (provided its variance is finite). This allows practitioners to calculate Confidence Intervals: ranges of values that, at a stated confidence level, are expected to contain the true population parameter.
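A small simulation can make the Central Limit Theorem more tangible. The sketch below assumes a strongly right-skewed exponential population (an arbitrary choice for illustration) and shows how the skewness of the sample means shrinks toward zero, the value for a symmetric normal curve, as the sample size grows. The function names are hypothetical.

```python
import random
import statistics

rng = random.Random(42)  # fixed seed so reruns give the same numbers


def sample_means(sample_size, n_samples=2000):
    """Means of repeated samples drawn from a right-skewed exponential population."""
    return [
        statistics.fmean(rng.expovariate(1.0) for _ in range(sample_size))
        for _ in range(n_samples)
    ]


def skewness(values):
    """Population skewness; values near 0 indicate a symmetric distribution."""
    mu = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return statistics.fmean(((v - mu) / sd) ** 3 for v in values)


results = {}
for n in (1, 5, 30, 100):
    means = sample_means(n)
    results[n] = (statistics.stdev(means), skewness(means))
    print(f"n={n:>3}  spread of means={results[n][0]:.3f}  skewness={results[n][1]:.2f}")
```

The spread of the sample means also narrows as n grows, which is why larger samples produce tighter confidence intervals.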

For instance, if a Data Detective samples 100 invoices and finds an average processing time of 45 minutes with a standard deviation of roughly 15 minutes, inferential statistics allow them to state with 95% confidence that the entire population's average processing time falls between 42 and 48 minutes (45 ± 1.96 × 15 / √100 ≈ 45 ± 3). This transition from "what happened in our sample" to "what is happening in our process" is vital for any professional seeking Lean Six Sigma certification. It ensures that the project team is not chasing "ghosts" created by sampling error.
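The invoice interval can be reproduced with a few lines of Python. This is a minimal sketch using the normal approximation; the 15-minute sample standard deviation is an assumption chosen to be consistent with a margin of about 3 minutes, and `mean_confidence_interval` is a hypothetical helper name.

```python
import math
from statistics import NormalDist


def mean_confidence_interval(sample_mean, sample_sd, n, confidence=0.95):
    """Normal-approximation confidence interval for a population mean."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    margin = z * sample_sd / math.sqrt(n)
    return sample_mean - margin, sample_mean + margin


# 100 invoices with a mean of 45 minutes; the standard deviation of
# 15 minutes is an assumed value for illustration.
low, high = mean_confidence_interval(45.0, 15.0, 100)
print(f"95% CI: {low:.1f} to {high:.1f} minutes")  # 95% CI: 42.1 to 47.9 minutes
```

For small samples (roughly fewer than 30 observations) a t-distribution multiplier would normally replace the z value, which widens the interval slightly.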

Hypothesis Testing: Proving the Root Cause (Chapter 21)

If stratification identifies the suspect and inferential statistics provide the evidence, Hypothesis Testing (Chapter 21) is the formal trial where the root cause is either convicted or acquitted. This is the most technical aspect of the "Data Detective" workflow, requiring a disciplined approach to statistical significance.

A hypothesis test begins with two competing statements:

- The Null Hypothesis (H0): The status quo. It assumes that there is no difference or no relationship (e.g., "Machine A and Machine B have the same defect rate").

- The Alternative Hypothesis (Ha): The detective’s theory. It assumes there is a real difference (e.g., "Machine B has a higher defect rate than Machine A").

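The objective is to compute the P-value: the probability of observing a difference at least as large as the one in the sample if the Null Hypothesis were true. In Six Sigma practice, the P-value is compared with a significance level (alpha), conventionally 0.05. If the P-value falls below alpha, the Data Detective "rejects the Null Hypothesis" and concludes that the factor in question has a statistically significant effect on the output. Significance alone does not establish causation, so the finding should still be checked against process knowledge before the factor is named a root cause.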
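To show how a P-value is obtained and compared with alpha, here is a hedged sketch of a two-proportion z-test on the defect rates of two machines. The defect counts are hypothetical, the function name is illustrative, and the test is run two-sided, which is a conservative choice when the suspicion points in one direction.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(defects_a, n_a, defects_b, n_b):
    """Two-sided z-test of H0: both groups share the same defect rate."""
    p_a, p_b = defects_a / n_a, defects_b / n_b
    pooled = (defects_a + defects_b) / (n_a + n_b)  # rate under H0
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: Machine A has 30 defects in 500 units,
# Machine B has 52 defects in 500 units.
z, p_value = two_proportion_z_test(30, 500, 52, 500)

alpha = 0.05
decision = "reject H0" if p_value < alpha else "fail to reject H0"
print(f"z = {z:.2f}, p = {p_value:.4f} -> {decision}")
```

With these invented counts the P-value lands a little above 0.01, so the Null Hypothesis of equal defect rates would be rejected at the 0.05 level.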

Common tests utilized in this phase include:

- T-Tests: To compare the means of two groups (e.g., Shift 1 vs. Shift 2).

- ANOVA (Analysis of Variance): To compare the means of three or more groups.

- Chi-Square Tests: To determine if there is a relationship between categorical variables (e.g., Vendor Name and Pass/Fail status).
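As one concrete instance of the tests listed above, the sketch below runs a chi-square test of independence on a vendor-by-result table. The three vendors and their pass/fail counts are hypothetical. To stay within the standard library, the P-value uses the closed-form upper tail exp(-x/2), which is valid only for exactly 2 degrees of freedom; a statistics package would be needed for tables of other sizes.

```python
import math


def chi_square_statistic(table):
    """Pearson chi-square statistic and degrees of freedom for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Count expected in this cell if vendor and result were unrelated
            expected = row_totals[i] * col_totals[j] / grand_total
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof


# Hypothetical data. Rows: Vendor 1, 2, 3. Columns: pass, fail.
table = [
    [188, 12],
    [185, 15],
    [167, 33],
]

stat, dof = chi_square_statistic(table)
if dof != 2:
    raise ValueError("the closed-form P-value below only holds for 2 degrees of freedom")
p_value = math.exp(-stat / 2)
print(f"chi-square = {stat:.2f}, dof = {dof}, p = {p_value:.5f}")
```

Here the expected counts are 180 passes and 20 fails per vendor, and the third vendor's 33 fails drive most of the statistic.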

To see these principles in action, practitioners often review an LSS Black Belt sample project, which demonstrates how complex hypothesis testing is documented and presented to stakeholders.

The Integrated "Data Detective" Workflow

To illustrate how these Chapters (19, 20, and 21) function together, consider a hypothetical case study involving a customer service call center experiencing high "Average Handle Time" (AHT).

- Stratification (The Initial Scan): The Data Detective stratifies the AHT data by call type, agent experience level, and time of day. They discover that "Technical Support" calls for "Product X" are 30% longer than any other call category.

- Inferential Statistics (Validating the Sample): The detective takes a random sample of 200 Technical Support calls. Using the free Six Sigma calculator, they determine the confidence interval for the mean AHT. The interval is narrow enough to conclude that the longer handle time reflects the thousands of calls handled monthly and is not a quirk of the sample.

- Hypothesis Testing (The Verdict): The detective suspects that a recent software update is the cause. They run a two-sample T-test comparing AHT before the update and after the update. The resulting P-value is 0.002. Since 0.002 is well below 0.05, the Null Hypothesis is rejected. The detective now has strong statistical evidence that handle time rose after the software update and, once other changes made in the same period are ruled out, can treat the update as the root cause.
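The verdict step can be sketched from summary statistics alone. The example below computes a Welch-style test statistic for the before and after samples and, because the Python standard library has no t-distribution, approximates the P-value with the normal distribution, which is reasonable for samples of about 200 calls each. All means, standard deviations, and sample sizes are hypothetical and are not meant to reproduce the 0.002 quoted above.

```python
import math
from statistics import NormalDist


def welch_statistic(mean_1, sd_1, n_1, mean_2, sd_2, n_2):
    """Welch test statistic for a difference in means (unequal variances allowed)."""
    se = math.sqrt(sd_1 ** 2 / n_1 + sd_2 ** 2 / n_2)
    return (mean_2 - mean_1) / se


# Hypothetical AHT summaries in minutes.
before = {"mean": 9.1, "sd": 3.0, "n": 200}
after = {"mean": 10.0, "sd": 3.2, "n": 200}

t = welch_statistic(
    before["mean"], before["sd"], before["n"],
    after["mean"], after["sd"], after["n"],
)

# Large-sample normal approximation to the two-sided P-value
p_value = 2 * (1 - NormalDist().cdf(abs(t)))

alpha = 0.05
print(f"t = {t:.2f}, approx p = {p_value:.4f}")
print("reject H0" if p_value < alpha else "fail to reject H0")
```

With small samples the exact t-distribution should be used instead, since the normal approximation understates the P-value.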

Without this structured approach, the team might have wasted weeks retraining agents or hiring more staff, when the actual solution required a technical fix to the software interface.

Why Technical Mastery Matters for Certification

Acquiring a Lean Six Sigma certification is not merely about learning definitions; it is about developing the analytical rigor to solve complex business problems. Employers value Black Belts and Green Belts precisely because they possess the "Data Detective" skillset. They do not guess; they prove.

The transition from the Analyse phase to the Improve phase is only successful if the root causes are accurately identified. If the detective fails to use stratification, they may miss the specific "pocket" of poor performance. If they ignore inferential statistics, they may react to a one-time anomaly. If they bypass hypothesis testing, they lack the objective proof needed to justify the investment in an improvement solution.

For those currently studying or looking to refresh their knowledge, utilizing resources such as Six Sigma flash cards can be an excellent way to internalize the differences between various statistical tests and their applications.

Conclusion: Solving the Mystery of Process Variation

The "Analyse" phase is where the most significant breakthroughs in a Six Sigma project occur. By utilizing stratification to narrow the focus, inferential statistics to validate findings, and hypothesis testing to confirm root causes, you transform from a process observer into a Data Detective.

This disciplined approach helps ensure that your efforts in the Improve and Control phases are targeted, effective, and sustainable. Whether you are leading a small practice project or a multi-million-dollar corporate initiative, these statistical tools are your most reliable allies in the pursuit of operational excellence.

If you are ready to master these techniques and advance your career, it is time to validate your expertise. Test your current knowledge with our free Lean Six Sigma Black Belt practice exam and see if you have the instincts of a true Data Detective.

Take the next step in your professional journey and enroll in an accredited program today to earn your Lean Six Sigma certification.