In the realm of operational excellence, there is a dangerous delusion that plagues boardrooms and production floors alike: the belief that all data is inherently "truth." Organizations claim to be data-driven, yet they frequently ignore the foundation upon which that data is built. If your measurement system is flawed, your data is not information: it is fiction. It is magic. And in a professional environment, relying on magic is a fast track to failed projects and hemorrhaging capital.

To truly master process improvement, one must first confront a hard reality: measurement system error is inevitable. Every time you take a measurement, the value you record is the sum of the true value of the part and the error introduced by the system used to measure it. Without a robust Measurement System Analysis (MSA), you are making billion-dollar decisions based on a coin flip.
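Written as a formula, this is the standard additive model of measurement error. If the gauge error is independent of the part's true value (an assumption, not something every gauge satisfies), the variances add. The Gage R&R percentage used throughout this article compares the two standard deviations:

```latex
y = x + \varepsilon
\quad\Longrightarrow\quad
\sigma^2_{\text{observed}} = \sigma^2_{\text{process}} + \sigma^2_{\text{measurement}},
\qquad
\%\text{GRR} = 100 \times \frac{\sigma_{\text{measurement}}}{\sigma_{\text{observed}}}
```

Because %GRR is a ratio of standard deviations, a system sitting exactly at the 30% limit contributes 0.30² = 9% of the observed variance. The percentage sounds smaller than the damage it can do.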

The Fundamental Purpose of MSA

The fundamental purpose of Measurement System Analysis is to ensure that the data you collect is consistent, reliable, unbiased, and correct. In the Lean 6 Sigma Hub glossary, MSA is defined as a mathematical method of determining how much variation within a process is contributed by the measurement system itself.

Before you ever attempt to calculate a process capability index or run a Shapiro-Wilk test for normality, you must validate your gauges. If your measurement system accounts for more than 30% of your total observed variation (the %GRR figure a Gage R&R study reports), your process improvement efforts are dead on arrival. You aren't fixing the process; you're chasing ghosts created by your own measuring tapes, scales, or software.

The Anatomy of Measurement Error: Accuracy vs. Precision

To fully appreciate the complexity of MSA, one must distinguish between the two primary categories of measurement error: Accuracy and Precision.

1. Accuracy (The "Location" of Data)

Accuracy refers to how close your measurements are to the "True" or "Master" value. In a professional MSA, we break accuracy down into three critical components:

- Bias: The difference between the average of the measurements and the reference value. If your scale consistently weighs items 2 grams heavy, you have a bias problem.

- Linearity: Is the bias the same across the measurement system's entire operating range? A thermometer might be accurate at 100°C but wildly off at 0°C.

- Stability: This is the change in bias over time. If your measurement system drifts throughout the day, your data integrity is compromised.
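Bias and linearity can both be estimated from repeated readings of reference parts whose master values are known: bias is the average reading minus the reference, and linearity is the slope of bias plotted against the reference value. The sketch below assumes that kind of data; `bias_and_linearity` is a hypothetical helper, and the least-squares fit is written out by hand to avoid extra dependencies.

```python
from statistics import mean


def bias_and_linearity(readings):
    """Bias at each reference value plus a least-squares linearity fit.

    readings maps a reference (master) value to repeated gauge readings of
    that reference part. Returns (bias_by_reference, slope, intercept) for
    the fitted line bias = intercept + slope * reference.
    """
    refs = sorted(readings)
    biases = [mean(readings[ref]) - ref for ref in refs]

    # Ordinary least squares of bias against reference value.
    x_bar, y_bar = mean(refs), mean(biases)
    sxx = sum((x - x_bar) ** 2 for x in refs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(refs, biases))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return dict(zip(refs, biases)), slope, intercept


# Hypothetical thermometer check against three reference baths (degrees C).
bias, slope, intercept = bias_and_linearity({
    0.0: [2.0, 2.5, 1.5],
    50.0: [51.0, 50.5, 51.5],
    100.0: [100.0, 100.5, 99.5],
})
print(bias)  # {0.0: 2.0, 50.0: 1.0, 100.0: 0.0}
print(slope, intercept)
```

With this made-up data the thermometer matches the example above: about 2°C high at 0°C and spot-on at 100°C. The fitted slope is non-zero (bias falls by 0.02°C per degree), which is the linearity problem. A slope near zero with a non-zero intercept would point to constant bias instead.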

2. Precision (The "Spread" of Data)

Precision, often evaluated through a Gage R&R (Repeatability and Reproducibility) study, focuses on the consistency of the measurements.

- Repeatability (Equipment Variation): The variation observed when the same appraiser uses the same gauge to measure the same part multiple times. If the numbers jump around, the equipment is likely the culprit.

- Reproducibility (Appraiser Variation): The variation in the average measurements made by different appraisers using the same gauge on the same part. If "Operator A" consistently reads higher than "Operator B," you have a training or standard work problem.

Gage R&R: The Litmus Test for Continuous Data

In the Measure phase of any DMAIC project, the Gage R&R study is your most powerful tool for continuous data. To run a statistically sound study, the common protocol is 10 representative samples, 3 appraisers, and 2 or 3 trials per appraiser.

The results of a Gage R&R study are typically expressed as a percentage of the total process variation or a percentage of the tolerance. The widely used benchmarks are:

- Under 10%: The measurement system is acceptable. You can trust your data.

- 10% to 30%: The system is marginal. Depending on the criticality of the metric and the cost of improvement, it may be accepted temporarily, but root cause analysis is advised.

- Over 30%: The system is unacceptable. Stop the project. Do not pass a tollgate review. Fix the measurement system before proceeding.
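The protocol and benchmarks above can be sketched with the two-way crossed ANOVA method for Gage R&R. This is a minimal sketch, assuming a balanced study in which every appraiser measures every part the same number of times. `gage_rr_anova` and `verdict` are hypothetical helpers. Some statistical packages pool a non-significant part-by-appraiser interaction into repeatability, and this version never does, so its figures can differ slightly from commercial software.

```python
import math
from statistics import mean


def gage_rr_anova(data, tolerance=None):
    """Crossed Gage R&R using the two-way ANOVA method (with interaction).

    data[part][appraiser] is the list of repeated trials for that pair.
    The design must be balanced: same appraisers and trial count for every part.
    """
    p, o, r = len(data), len(data[0]), len(data[0][0])
    values = [x for part in data for cell in part for x in cell]
    grand = mean(values)
    part_means = [mean([x for cell in part for x in cell]) for part in data]
    appr_means = [mean([x for part in data for x in part[j]]) for j in range(o)]
    cell_means = [[mean(cell) for cell in part] for part in data]

    # Sums of squares: part, appraiser, part x appraiser, and pure error.
    ss_total = sum((x - grand) ** 2 for x in values)
    ss_part = o * r * sum((m - grand) ** 2 for m in part_means)
    ss_appr = p * r * sum((m - grand) ** 2 for m in appr_means)
    ss_inter = r * sum(
        (cell_means[i][j] - part_means[i] - appr_means[j] + grand) ** 2
        for i in range(p)
        for j in range(o)
    )
    ss_error = ss_total - ss_part - ss_appr - ss_inter

    # Mean squares: sum of squares divided by degrees of freedom.
    ms_part = ss_part / (p - 1)
    ms_appr = ss_appr / (o - 1)
    ms_inter = ss_inter / ((p - 1) * (o - 1))
    ms_error = ss_error / (p * o * (r - 1))

    # Variance components; negative estimates are truncated to zero.
    repeatability = max(0.0, ms_error)
    interaction = max(0.0, (ms_inter - ms_error) / r)
    appraiser = max(0.0, (ms_appr - ms_inter) / (p * r))
    part_var = max(0.0, (ms_part - ms_inter) / (o * r))

    reproducibility = appraiser + interaction
    grr = repeatability + reproducibility
    total = grr + part_var
    result = {
        "repeatability_var": repeatability,
        "reproducibility_var": reproducibility,
        "part_var": part_var,
        # %GRR against study variation is a ratio of standard deviations.
        "pct_study_var": 100 * math.sqrt(grr / total),
        # ndc = 1.41 * (part SD / gauge SD), truncated to an integer.
        "ndc": int(1.41 * math.sqrt(part_var / grr)) if grr > 0 else None,
    }
    if tolerance is not None:
        # Study variation is taken as 6 gauge standard deviations.
        result["pct_tolerance"] = 100 * 6 * math.sqrt(grr) / tolerance
    return result


def verdict(pct):
    """Map a %GRR figure onto the 10% / 30% benchmarks."""
    if pct < 10:
        return "acceptable"
    if pct <= 30:
        return "marginal"
    return "unacceptable"
```

For the 10-part, 3-appraiser, 3-trial layout, `data` is a 10 × 3 × 3 nested list. Pass `tolerance=USL - LSL` to get %Tolerance alongside %Study Variation, then feed either figure to `verdict`.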

The "Magic" Trap: Why Your Results are Probably Biased

Many professionals claim to have "checked" their data, but they fall into the trap of Repeatability or Reproducibility bias. These are the "magical" elements that make a measurement system look better than it actually is.

Repeatability Bias occurs when an appraiser is asked to measure the same part twice in immediate succession. Appraisers tend to remember the first result and, consciously or not, repeat it. This creates an illusion of high precision. To combat this, separate the trials in time and randomize the measurement order. The appraiser should not know which sample they are holding.
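A study coordinator can apply the randomization and blinding advice by generating a coded run sheet in advance. This is a minimal sketch, assuming that parts get a fresh random three-digit code at the start of each trial and that only the coordinator keeps the code-to-part key. `blind_run_sheet` is a hypothetical helper, not a feature of any particular MSA software.

```python
import random


def blind_run_sheet(parts, appraisers, trials, seed=None):
    """Randomized, coded run order for a Gage R&R study.

    Every appraiser finishes one trial before the next begins, which spaces
    repeat measurements of the same part apart in time.
    """
    rng = random.Random(seed)
    sheet = []
    for trial in range(1, trials + 1):
        # New codes each trial, so a code seen earlier reveals nothing later.
        codes = rng.sample(range(100, 1000), len(parts))
        for appraiser in appraisers:
            order = list(zip(parts, codes))
            rng.shuffle(order)  # fresh random order per appraiser and trial
            for part, code in order:
                # Appraisers see only "code"; the coordinator keeps "part".
                sheet.append({"trial": trial, "appraiser": appraiser,
                              "code": code, "part": part})
    return sheet


sheet = blind_run_sheet([f"P{i}" for i in range(1, 11)], ["A", "B", "C"], trials=3, seed=7)
for row in sheet[:3]:
    print(row["trial"], row["appraiser"], row["code"])
```

The printed view deliberately omits the `part` column. Hand appraisers only the trial, their own name, and the code, and record the readings in a place they cannot look back at.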

Reproducibility Bias happens when operators know they are being tested. They suddenly follow the Standard Operating Procedure (SOP) perfectly for the duration of the study, only to return to "the way we've always done it" the moment the Six Sigma Black Belt leaves the room. This is why proper process documentation and blind studies are essential for capturing reality.

The Financial Consequences of Bad Data

Ignoring MSA isn't just a statistical oversight; it is a financial disaster. When you rely on untrustworthy data, two kinds of misclassification occur, and both increase your Cost of Poor Quality (COPQ):

- Type I Error (Producer’s Risk): Your measurement system tells you a good part is bad. You scrap or rework perfectly functional products, wasting materials and labor.

- Type II Error (Consumer’s Risk): Your measurement system tells you a bad part is good. You ship defective products to the customer, leading to returns, warranty claims, and a decimated brand reputation.
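A quick way to see both risks grow with gauge error is a Monte Carlo simulation: draw true part values, add gauge noise, and compare the accept/reject decision on the measured value with the decision on the true value. This is a minimal sketch, assuming normally distributed process and gauge errors and made-up specification limits. `misclassification_rates` is a hypothetical helper, and both rates are expressed as a share of all parts produced.

```python
import random


def misclassification_rates(process_mean, process_sd, gauge_sd, lsl, usl,
                            n=100_000, seed=0):
    """Estimate false-reject and false-accept rates by Monte Carlo simulation."""
    rng = random.Random(seed)
    false_reject = false_accept = 0
    for _ in range(n):
        true_value = rng.gauss(process_mean, process_sd)
        measured = true_value + rng.gauss(0.0, gauge_sd)
        truly_good = lsl <= true_value <= usl
        judged_good = lsl <= measured <= usl
        if truly_good and not judged_good:
            false_reject += 1  # Type I error: producer's risk
        elif judged_good and not truly_good:
            false_accept += 1  # Type II error: consumer's risk
    return false_reject / n, false_accept / n


# Hypothetical process centred on 10.0 with specs at 7.0 and 13.0.
for gauge_sd in (0.1, 0.5, 1.0):
    fr, fa = misclassification_rates(10.0, 1.0, gauge_sd, lsl=7.0, usl=13.0)
    print(f"gauge SD {gauge_sd}: false reject {fr:.2%}, false accept {fa:.2%}")
```

With a capable, centred process most parts sit far from the limits, so false rejects dominate. Shift `process_mean` toward a limit and false accepts climb as well.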

Consider a hypothetical case where a manufacturer of precision medical components bypasses MSA. Had they run a Gage R&R, their gauge would have scored 35%, well into unacceptable territory. They "adjust" their process based on these readings, inadvertently moving the process mean away from the target. The result? A 15% increase in scrap rate, totaling $250,000 in annual losses: all because they trusted a $500 gauge that hadn't been validated. This is why tools like the Project Charter ROI Calculator are vital for illustrating the stakes involved in data integrity.
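The case does not say exactly how the operators adjusted, so the sketch below assumes a simple full-correction rule: after every reading, shift the setpoint by the observed deviation from target. This resembles rule 2 of Deming's funnel experiment. `simulate_adjustment` is a hypothetical helper, and the process and gauge standard deviations are made-up values. It compares the spread of the true output with and without these adjustments.

```python
import random
from statistics import pstdev


def simulate_adjustment(adjust, process_sd, gauge_sd, target=0.0, n=20_000, seed=0):
    """Return the spread of true output, with or without adjusting on each reading."""
    rng = random.Random(seed)
    setpoint = target
    outputs = []
    for _ in range(n):
        true_value = setpoint + rng.gauss(0.0, process_sd)
        measured = true_value + rng.gauss(0.0, gauge_sd)
        outputs.append(true_value)
        if adjust:
            # Full correction: move the setpoint by the observed deviation.
            setpoint -= measured - target
    return pstdev(outputs)


print(simulate_adjustment(False, process_sd=1.0, gauge_sd=0.6))
print(simulate_adjustment(True, process_sd=1.0, gauge_sd=0.6))
```

Under this rule each correction copies that cycle's process noise and gauge noise into the next setpoint. The adjusted output spread therefore comes out near √(2 × 1.0² + 0.6²) ≈ 1.54, compared with about 1.0 when the process is left alone. The noisier the gauge, the worse the "fix."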

Implementing a Robust MSA Protocol

To stop relying on magic and start relying on math, follow these non-negotiable steps:

- Select the Right Samples: Use 10 to 20 samples that represent the entire range of process variation. Do not just pick "good" parts.

- Ensure Independence: Appraisers must perform their measurements independently. Use a "blind" study format where they cannot see each other's results or their own previous results.

- Check for Stability First: Ensure your gauge is calibrated and stable over time before running a full Gage R&R.

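- Analyze the "Number of Distinct Categories" (ndc): A good measurement system should be able to distinguish between different parts. The ndc estimates how many non-overlapping groups of parts the gauge can reliably tell apart, and it is commonly computed as 1.41 × (part-to-part standard deviation ÷ Gage R&R standard deviation). If your ndc is less than 5, your gauge lacks the resolution needed to see the process variation.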

- Document Everything: Use lessons learned documentation to ensure that the measurement standards established during the study are maintained long-term.
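One common way to carry out the stability check is to measure the same master part at regular intervals and plot the readings on an individuals and moving range (I-MR) chart. A drift in bias then shows up as points outside the limits. This is a minimal sketch, assuming a baseline of readings taken while the gauge was known to be in calibration. The helper names are hypothetical, and only the simplest out-of-limits rule is applied, not a full set of run rules.

```python
from statistics import mean


def imr_limits(readings):
    """I-MR control limits from repeated readings of one master part."""
    moving_ranges = [abs(b - a) for a, b in zip(readings, readings[1:])]
    center = mean(readings)
    mr_bar = mean(moving_ranges)
    return {
        "center": center,
        # 2.66 = 3 / d2, with d2 = 1.128 for moving ranges of size 2.
        "lcl": center - 2.66 * mr_bar,
        "ucl": center + 2.66 * mr_bar,
        # D4 = 3.267 for moving ranges of size 2.
        "mr_ucl": 3.267 * mr_bar,
    }


def out_of_control(readings, limits):
    """Indices of readings outside the individuals-chart limits."""
    return [i for i, x in enumerate(readings)
            if not limits["lcl"] <= x <= limits["ucl"]]


# Hypothetical daily checks of a 10.0 mm master block.
baseline = [10.0, 10.1, 9.9, 10.0, 10.1, 9.9, 10.0]
limits = imr_limits(baseline)
print(out_of_control([10.0, 10.2, 10.6], limits))  # [2]
```

A flagged reading means the gauge's bias has shifted since the baseline. Recalibrate and find the cause before spending appraiser time on a full Gage R&R.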

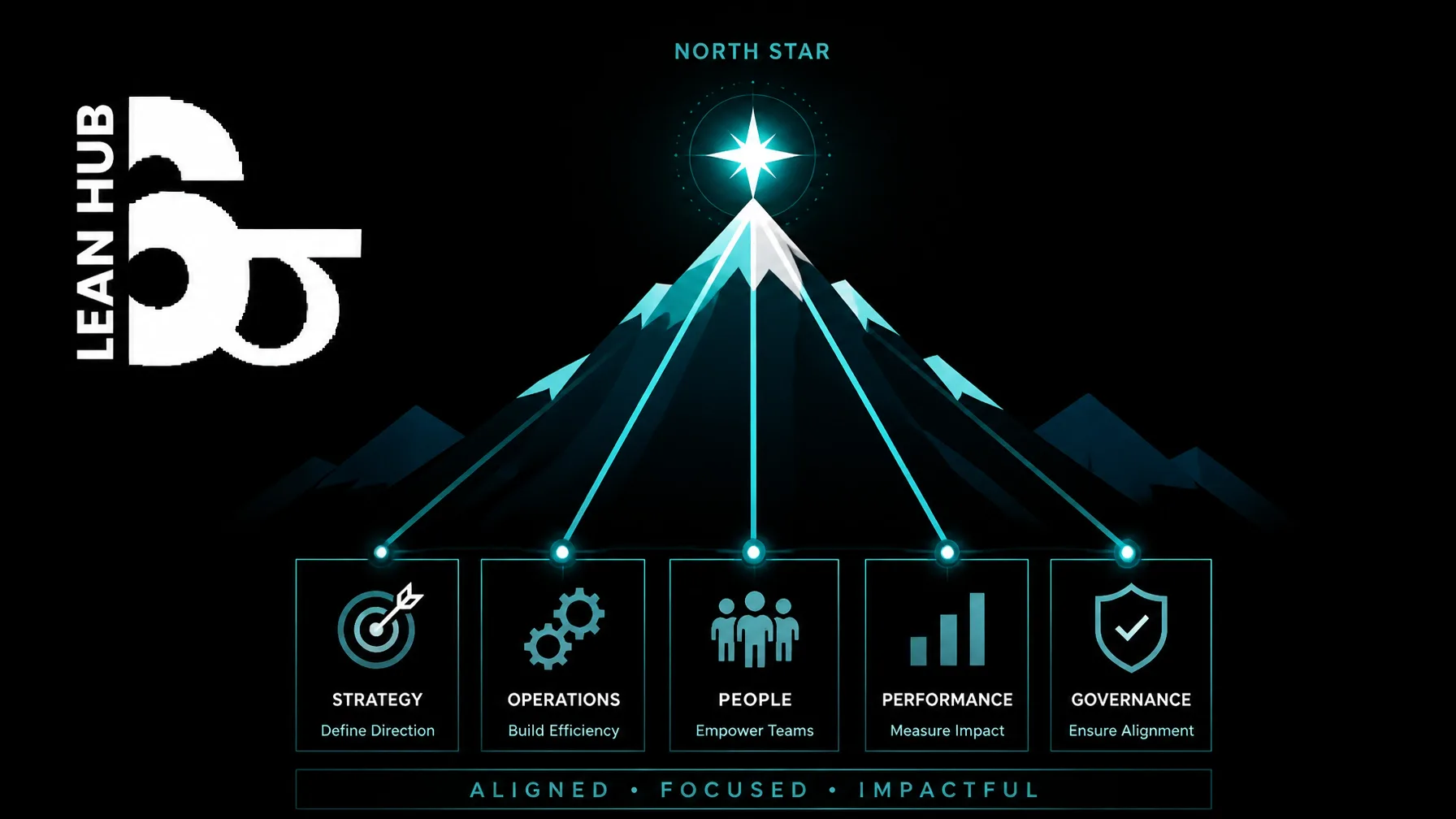

From Measurement to Mastery

Data is the lifeblood of Lean Six Sigma. Whether you are building a CTQ Tree or performing process mapping in the Measure phase, the validity of your output is capped by the quality of your input.

The difference between a "management by gut feeling" culture and a true Six Sigma organization is the refusal to accept data at face value. A seasoned professional treats every data point with healthy skepticism until the measurement system has been statistically proven. MSA is the barrier between professional-grade analysis and amateur guesswork.

If you are ready to stop guessing and start delivering results that actually impact the bottom line, you need to master the technical rigor of Measurement System Analysis. It is the only way to ensure your "quick wins" don't turn into long-term liabilities.

Take control of your data integrity and advance your career by enrolling in our accredited certification programs.